There’s a small but telling detail in how a new generation of AI-native code editors describes itself: not as coding tools with AI features bolted on, but as editors built for the age of AI from the ground up. The architecture, the interface, the assumptions about how developers work: all of it is designed with AI as a first-class citizen, not a layer applied on top.

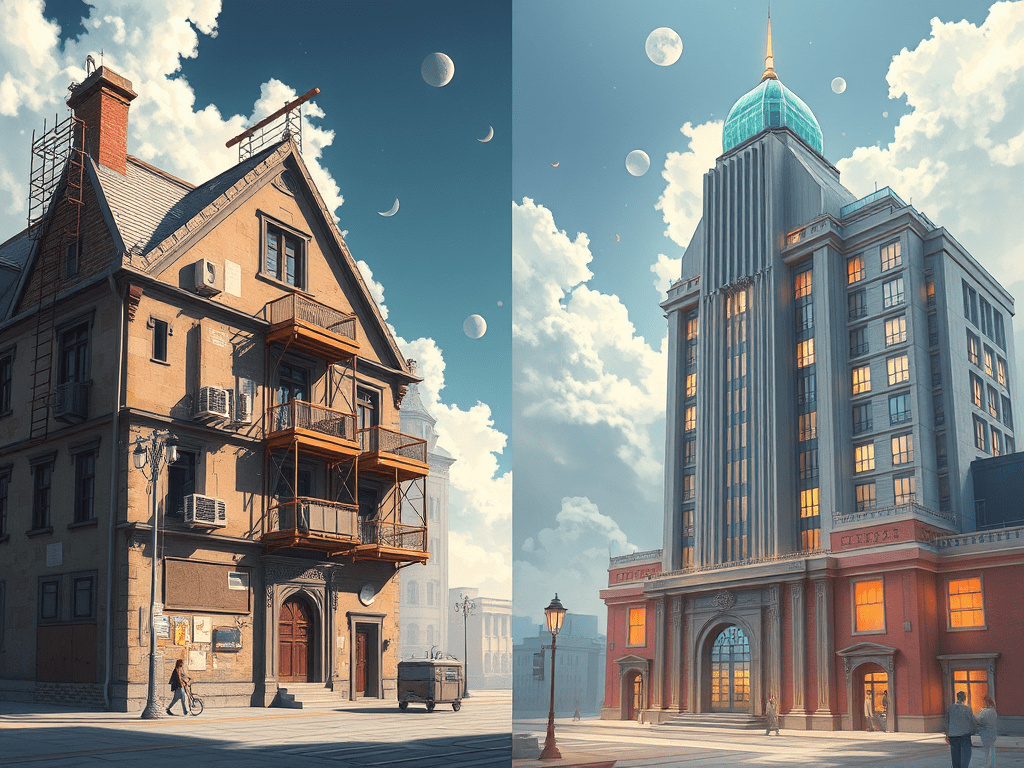

The incumbents in this space, by contrast, retrofitted AI onto existing products with enormous installed bases and partnership agreements that constrained which foundation models they could use. The AI-native challengers stayed model-agnostic, adopted the best frontier models the moment they launched, and shipped features faster than teams ten times their size. The result: teams of a few dozen people are taking meaningful market share from some of the most powerful software incumbents on the planet.

That’s the AI-native startup story in miniature. And it’s repeating across categories.

What “AI-native” actually means structurally

The phrase risks becoming meaningless through overuse, so it’s worth being precise about what distinguishes an AI-native startup architecturally from a company that has added AI capabilities.

Traditional software is built around a core workflow (the thing the application does), with data and intelligence layered in over time. AI-native startups invert that order. They take access to capable foundation models as a given, build on top of AI APIs rather than building the models themselves, and design their entire product logic around the assumption that reasoning, generation, and automation are cheap and available from day one.
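For readers who think in code, here is a minimal sketch of what "model-agnostic" can look like in practice. It is an illustration under stated assumptions, not a description of how any company named in this post is built: the `TextModel` interface, the two stub providers, and `draft_outreach` are all hypothetical names, and the stubs return canned text instead of calling a real API.

```python
from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubModelA:
    """Stand-in for one vendor's API client; makes no network calls."""
    name: str = "stub-a"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class StubModelB:
    """Stand-in for a second vendor with different output behaviour."""
    name: str = "stub-b"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt.upper()}"


def draft_outreach(model: TextModel, company: str) -> str:
    """Product logic depends only on the interface, never on a vendor."""
    return model.complete(f"Write a short intro email to {company}")


if __name__ == "__main__":
    # Swapping the underlying model is a one-line change for the caller.
    for model in (StubModelA(), StubModelB()):
        print(draft_outreach(model, "Acme"))
```

The point of the design is that the product function never names a vendor, so adopting a newly launched model means writing one more adapter rather than reworking the product.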

The implication isn’t just technical. It changes the team structure, the cost base, the speed of iteration, and fundamentally what’s possible with a small headcount. Tasks that previously required entire departments — content generation, data synthesis, customer interaction, code review — become tractable for a founding team of five.

The cloud analogy is apt, but incomplete

The comparison to cloud computing is one the venture community reaches for frequently, and it holds up reasonably well. Cloud didn’t just make existing software cheaper to run — it enabled entirely new business models that would have been impossible or economically unviable in an on-premise world. Dropbox, Slack, Stripe: none of these are simply “traditional software moved to the cloud.” They were products whose core value propositions required cloud architecture to exist.

The pattern with AI-native startups is similar, but the timeline is compressed. Cloud’s transformation of the startup landscape played out over roughly a decade. AI’s equivalent shift appears to be moving faster, partly because the infrastructure matured quickly and partly because the productivity leverage is more immediate and visible.

Clay is a useful example here. Its value proposition — hyper-personalised sales outreach, enriched with data from dozens of sources, generated at scale — simply doesn’t exist in a pre-AI world. It’s not a better version of a spreadsheet. It’s a product category that AI made possible. The same is true of Sierra in customer service, Rillet in finance automation, and a growing cohort of vertical-specific AI-first applications carving out meaningful share in categories previously assumed to be incumbent-proof.

Why incumbents are finding this harder than expected

The Menlo Ventures 2024 State of Generative AI report observed something that initially seemed counterintuitive: AI-native startups were winning market share from incumbents who had every structural advantage — distribution, data moats, enterprise relationships, capital. The report’s framing: incumbents are constrained by their existing architectures, partner agreements, and the organisational weight of their legacy businesses.

The product velocity gap is real. An AI-native team with ten engineers can ship a feature in a week that a large incumbent would take a quarter to plan and another quarter to build. The absence of technical debt, legacy integrations, and organisational politics turns out to be a genuine competitive asset rather than just the romantic story startups tell about themselves.

This connects directly to the capital concentration dynamics explored earlier — the barbell is widening, but not in the way the original post anticipated. It isn’t just large models versus niche applications. It’s increasingly large incumbents versus small AI-native teams who’ve found the gap between what incumbents can ship and what the market wants, and built directly into it.

The questions that don’t have clean answers yet

The PMF instability discussion from a few posts back applies here with particular force. AI-native startups are winning fast — but the same architectural agility that lets them outpace incumbents also makes them vulnerable to the next wave of AI-native competitors. The advantage one team built by moving faster and staying model-agnostic could, in theory, be replicated by the next team with access to a better model and a cleaner product intuition.

The open question — and it’s a genuinely interesting one — is what durable advantage looks like for an AI-native startup beyond the initial velocity window. Data flywheels, workflow lock-in, proprietary fine-tuning, deep vertical integration: these are the moats being built right now, and which of them actually hold is one of the more consequential experiments playing out in the venture ecosystem.

The cloud analogy is instructive one more time: the first wave of cloud-native startups produced some of the most durable software businesses of the last twenty years. It also produced a long list of companies that moved fast, raised well, and were outcompeted by the next architectural shift before the moat was dug.

Which wave is this — and how deep are the foundations being laid?

Looking at the AI-native companies you’re watching — what do you think their durable advantage actually is, beyond being faster than incumbents right now?

Let’s keep learning — together
