Here is a quiet productivity problem hiding in plain sight across most enterprises: employees lose nearly a full workday every week just trying to find the right information. Not analysing it. Not acting on it. Finding it.

The culprit, more often than not, is keyword search. A technology that’s deceptively simple — and deceptively limited.

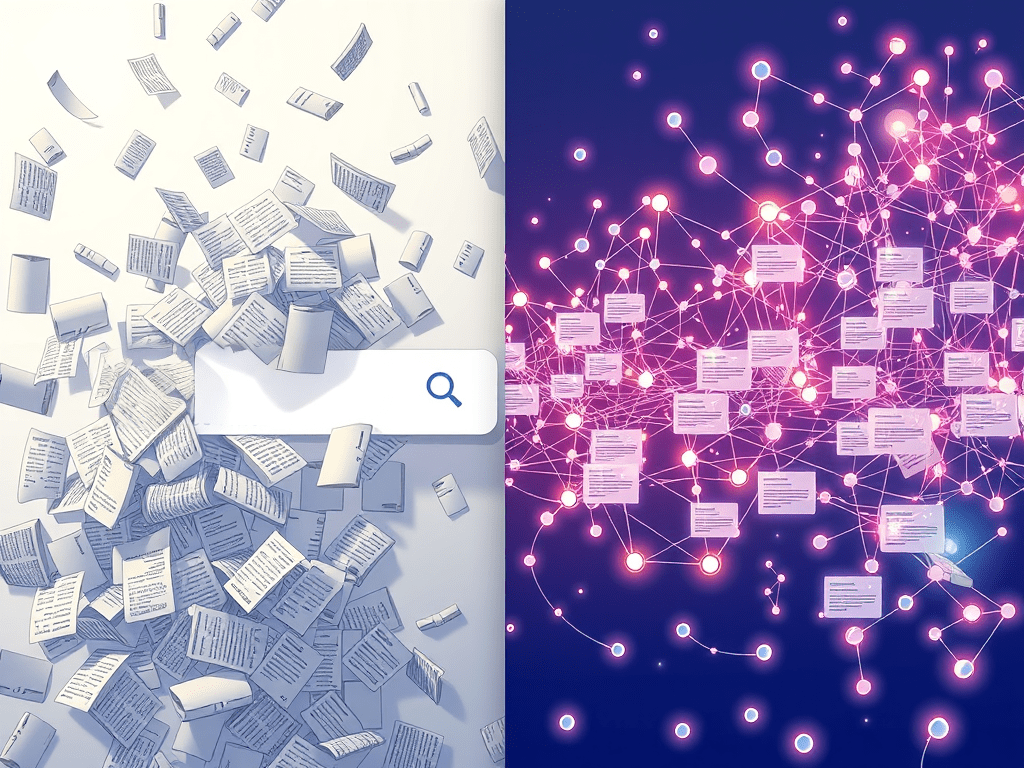

The fundamental problem with matching strings

Keyword search does one thing: it looks for the words you typed. Type “customer onboarding issues” and it returns documents containing those exact words. Type “client setup problems” — meaning the same thing — and you get different results, or none at all.

This isn’t a bug. It’s the architecture. Keyword search has no concept of meaning, context, or intent. It pattern-matches strings. The system doesn’t know that “automobile” and “car” are the same thing, that “fix” and “resolve” are synonyms in a support context, or that a question about “running slow” might refer to application performance rather than a jogging problem.

For decades this was an acceptable limitation — the cost of search being fast and computationally cheap. What’s changed is that the constraint is now resolvable, and the productivity cost of leaving it in place is becoming harder to justify.

What semantic search actually does differently

Semantic search doesn’t match words. It matches meaning.

The underlying mechanism relies on representing text as mathematical vectors — numerical encodings where similar meanings cluster close together in high-dimensional space. When a user queries “how do I speed up the checkout process,” the system doesn’t look for those exact words. It finds documents whose meaning is most similar to the meaning of the query — which might include documents about payment flow optimisation, cart abandonment reduction, or performance bottlenecks, none of which contain the original phrase.

The practical upshot is that users find what they’re actually looking for even when they don’t know the exact terminology the organisation uses. A new employee unfamiliar with internal jargon gets the same relevant results as a ten-year veteran who knows every acronym. Context narrows the results appropriately — a search in a legal document management system understands “precedent” differently than the same word in an educational platform.

This is also the architectural thread connecting directly to the RAG discussion from a year ago and the AI stack’s missing layer. Retrieval-Augmented Generation — the pattern that keeps enterprise AI honest by grounding model responses in real organisational knowledge — depends entirely on semantic retrieval working well. If the retrieval layer returns poor results, the generative layer confidently hallucinates. The search problem and the AI accuracy problem turn out to be the same problem.

Where knowledge graphs fit

Vector search alone has a useful weakness worth understanding. It finds documents that are similar to the query — but similarity isn’t always the same as relevance. A vector search for “Apple earnings” might surface documents about fruit nutrition if the embedding model doesn’t have enough domain context to distinguish.

Knowledge graphs address the structural layer that vectors handle imperfectly. Rather than encoding meaning statistically, knowledge graphs encode relationships explicitly — Apple the company is connected to Tim Cook, to the iPhone product line, to Nasdaq, to quarterly reporting cycles. When a user searches for Apple’s financial performance, the graph knows which Apple and surfaces results accordingly.

The pattern gaining momentum in enterprise search is the hybrid stack: vector embeddings for semantic similarity, knowledge graphs for relational structure and reasoning, combined in what’s increasingly called GraphRAG. Legal firms using this architecture can search millions of case documents for conceptual precedents, surfacing relevant cases that share no keywords with the query. Healthcare systems can connect symptoms, diagnoses, treatments, and patient history through a unified semantic model rather than siloed text search.

Neo4j unveiled federated knowledge graph support with vector embedding integration in October. IBM’s watsonx.graph added AI-augmented schema discovery the same month. The infrastructure race for enterprise semantic search is actively underway.

The honest limitation

It’s worth noting that semantic search isn’t universally better than keyword search — it’s differently better.

For exact lookups — a specific contract number, a known SKU, a precise legal citation — keyword search remains faster and more precise. The query “invoice 2847-B” has a single correct answer, and semantic interpretation adds noise rather than value.

The organisations building the most effective search infrastructure are the ones implementing hybrid approaches: keyword matching for exact retrieval, semantic search for intent-based exploration, knowledge graphs for relational context. Most enterprise search platforms have spent the last two years building these hybrid capabilities as their core differentiation.

The employees who spend a workday a week hunting for information deserve all three layers working together.

What this means for knowledge management

The broader implication is one worth sitting with. Most enterprise knowledge management systems were built on the assumption that if you tagged documents correctly and organised them into the right folder structure, people would find things. That assumption was always optimistic. In practice, taxonomies drift, tagging is inconsistent, and the person who created the folder structure left three years ago.

Semantic search quietly makes a lot of that governance overhead less critical. The system finds the relevant document even if it’s miscategorised, because it’s searching for meaning rather than metadata. That doesn’t make data governance irrelevant — the data governance thread explored why good data foundations still matter enormously — but it shifts where the value of good governance is realised.

The search bar was never really searching. It was just matching. The distinction, it turns out, is worth an entire workday a week.

In your organisation, which use case would benefit most immediately from semantic search — internal knowledge retrieval, customer support, or something else entirely?

Let’s keep learning — together.

Share your thoughts